Harms Framework

This framework draws inspiration from other attempts to define harmful speech, ranging from legal exceptions to free speech protections (e.g. the oft-cited public endangerment example of "shouting fire in a crowded theater" or inciting "imminent lawless action"4) to the Dangerous Speech Project, which identifies a subset of hate speech that has greater potential to incite violence.5 Notably, the question of "why" is absent from this framework, as intent is notoriously difficult to assess online. Search results are filtered through an opaque ranking algorithm, making coordinated manipulation attempts even more difficult to spot.

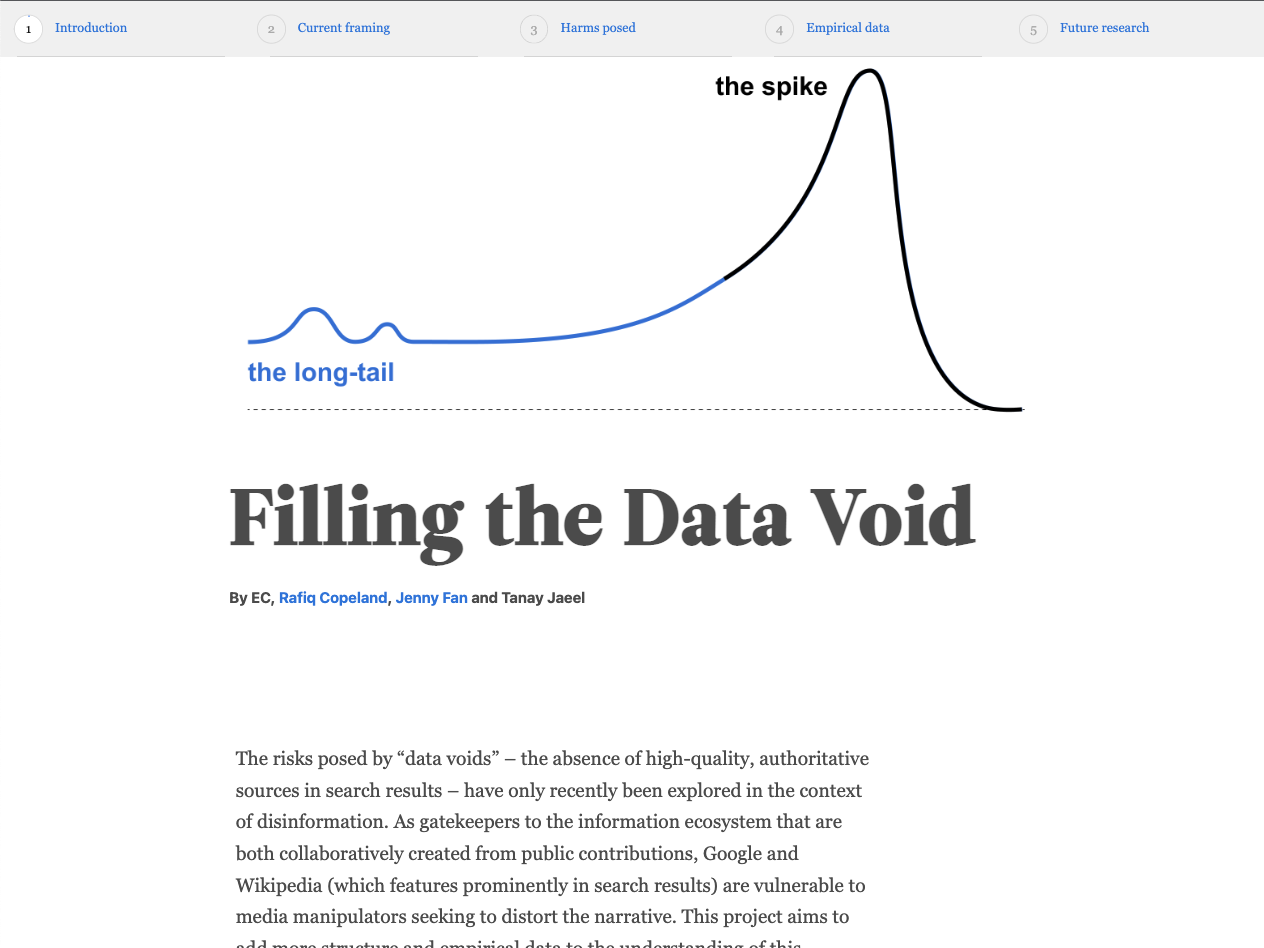

Data void lifecycles

We plotted the peak week of search activity for each term (in red) and layered in the specific times that authoritative media articles entered the discussion (in blue) and the specific times that edits were made to relevant Wikipedia articles (in yellow). Together, these data give a timeline of when credible news sources published about a term relative to when searches for that data void were spiking.
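
As a rough illustration of this kind of timeline comparison, the Python sketch below finds the peak week in a series of weekly search counts and measures how many days before or after that peak each news article or Wikipedia edit appeared. It is a minimal sketch under stated assumptions, not the analysis code behind the figures: the function names `peak_week` and `days_from_peak`, and every date and count, are hypothetical.

```python
from datetime import date

def peak_week(weekly_counts):
    """Return the week-start date with the highest search count.

    If several weeks tie, the first one in the mapping is returned.
    """
    return max(weekly_counts, key=weekly_counts.get)

def days_from_peak(peak, event_dates):
    """Return sorted signed offsets in days from the start of the peak week.

    Negative values are events before the peak week began.
    """
    return sorted((d - peak).days for d in event_dates)

# Hypothetical data for a single search term (illustration only).
weekly_searches = {
    date(2020, 3, 2): 12,
    date(2020, 3, 9): 85,
    date(2020, 3, 16): 40,
}
news_articles = [date(2020, 3, 11), date(2020, 3, 18)]
wikipedia_edits = [date(2020, 3, 5), date(2020, 3, 12)]

peak = peak_week(weekly_searches)
news_offsets = days_from_peak(peak, news_articles)
edit_offsets = days_from_peak(peak, wikipedia_edits)

print("Peak week starts:", peak)
print("News article offsets (days):", news_offsets)
print("Wikipedia edit offsets (days):", edit_offsets)
```

In this made-up case, the earliest credible news article has a positive offset, meaning it arrived after the start of the peak search week. That is the kind of gap the timeline is meant to surface.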